- Crawl, Rank, Repeat.

The DaSilva Download – February Edition

What’s On My Mind This Month?

I’m Mellllllting…

Hi friends,

It’s been -20 to -30 here for what feels like an eternity.

Today it was -1. The sun hit my face and I swear I felt my brain cells defrost in real time.

Which is perfect timing, because I have so much to talk about this month!

I’ve been deep in AI visibility experiments, quality rater audits, robot cowboys, and one of the most delightfully meaningless sports I’ve ever seen… anything to stop me from reading the news.

Let’s get into it.

🥓 Good News: Competitive Skillet Curling Is a Thing

Source: NPR

Honestly, can the internet JUST be this?

In Chattanooga, Tennessee, 32 teams gathered for the Annual Skillet Curling Championship. Imagine Olympic curling… but instead of granite stones, you’re hurling cast iron skillets across the ice. And instead of a bullseye, you’re aiming for “the bacon” — a grill press tossed down the rink before each round.

This is a real thing!

Teams have names like Wu-Tang Pans, Natural Born Curlers, and Rock, Paper, Skillets. Some competitors throw their skillets upside down (called a “turtle”). Others crouch, kneel, or basically yeet iron across the rink and hope for the best. It’s part skill, part luck, part absolute nonsense. The event has raised $90,000 this year alone for the Chattanooga Area Food Bank. It’s free to attend. There are costumes. There’s a taco bar awards night. There are hardware-store brooms that “don’t do anything other than make the team feel like they’re doing something.”

One of the competitors described it as “completely meaningless.” Chef’s kiss.

🧪 Tip of the Month: Audit Like a Google Quality Rater

Dan Hickey shared a smart workflow on LinkedIn that I’ve started using — and it’s actually very solid. The idea: use Google’s Antigravity (their agentic development platform) to simulate a Quality Rater review of your site.

You essentially prompt it to act as a “Quality Rater Guidelines Agent,” then have it open Chrome, review the SERP, analyze your page, and identify gaps against what Google says it values. It’s a surprisingly practical way to pressure test your content through the lens Google trains humans to use. If you’ve never looked at your site the way a Quality Rater would, this is a very fast way to start.

Here’s an excerpt from his LinkedIn post:

Here is the exact workflow we use to give an AI agent the "brain" of a Quality Rater:

1. Build The Knowledge Base First. Grab a markdown version of Google's 168-page Quality Rater Guidelines on GitHub. This is the source of truth. Now the model knows exactly what "Needs Met," "YMYL," and "E-E-A-T" actually mean.

2. Ask the Gemini 3 Pro powered Agent in Antigravity to digest that file and convert it into a strict, step-by-step auditing checklist.

3. Give the agent a keyword (e.g., "Digital PR Agencies") and your landing page URL. Then, tell it to open Chrome, navigate to Google, and review the SERP and your page against that checklist.

4. Ask it to create a markdown file with its review of your site for that keyword, using the Quality Rater Guidelines and the checklist.

This is where it gets crazy.

- Antigravity opens a real Chrome browser instance. It navigates to Google and performs the search.

- It analyzes the SERP layout to understand the user intent. It clicks through to your page in the SERP.

- It scrolls, reads the footer, checks your "About" page, and evaluates your content depth.

- It then creates the markdown file with a review of your page with scores from the Quality Raters Guidelines metric.

It even creates a video of the actions it takes in Chrome so you can watch it perform the analysis.

You get a full report detailing exactly where you passed and where you failed the "Needs Met" criteria.

That’s important. You are getting feedback with the same lens that Google applies when assessing SERPs and it does so by understanding the rules of the game.

Pro Tip: Don't just audit yourself. Ask Antigravity to run the same review on the #1 ranking page in your niche.

- Compare the reports side-by-side.

- Do they have better Authorship signals?

- Is their Main Content more comprehensive?

- Does their navigation get users to "Needs Met" faster, or with a better score?

👀 What I’m Keeping an Eye On

🎬 Anthropic vs. ChatGPT (Super Bowl Edition)

Anthropic ran a series of Super Bowl ads that said: “Ads are coming to AI. But not to Claude.” Which is bold, considering if they ever introduce ads, the internet will never let them forget it. What I find more interesting though is their “constitution” work. They’re trying to explain the values behind the product. Not just what it does, but what it stands for. That’s positioning. And in AI right now, trust is a brand strategy.

🤠 Robot Cowboys Have Arrived

A new startup, GrazeMate, run by Sam Rogers, a 19-year-old cattle farmer from North Queensland, announced that it is using autonomous drones to muster cattle across massive farms, all controlled through a phone app. Apparently a single drone can control up to 2,000 animals.

Source: Startup Daily

🧠 AI Slop Is Now Measurable

Kapwing found that roughly 33% of the first 500 YouTube Shorts shown to a brand-new account fall into what they call “brainrot”: low-quality, bizarre, mass-produced AI videos designed purely to grab attention. So the real question: is this the year platforms clean it up… or the year brands finally decide not to contribute to it? I have my doubts.

Source: Kapwing

📻 The Analog Backlash Is Growing

People are intentionally choosing offline hobbies, screen-free Sundays, crafting, landlines. If AI keeps flooding feeds with low-effort content, the human craving for signal is only going to grow. That tension is going to shape marketing this year.

Source: CNN

🔍 Is ChatGPT Just a Search Engine?

I tend to agree with this take. I believe that in a lot of cases the same SEO principles and foundational work still apply to being found in LLMs. That’s what I’ve been telling my clients, and it’s a big part of why I started building my own AI visibility tool: to better understand how the process actually works, not just guess at it. I wouldn’t go all in on calling GEO a total grift. But I do think it’s being positioned as something bigger than it is. At the end of the day, it’s just a few additional tools in the overall SEO toolbox, not a replacement for the fundamentals.

Source: Queryburst

🤖 Apple & Gemini sittin’ in a tree…

Apple’s next-gen AI models are reportedly being built on Google’s Gemini. Apple has partnered with Google before, most notably by making it the default search engine. Now they’re choosing Google at the infrastructure level for AI. When Apple picks a foundation model, they’re signaling who they believe is going to win, or at least who they trust to power the future of their ecosystem.

Source: CNBC

🚀 What I’m Focused on Now

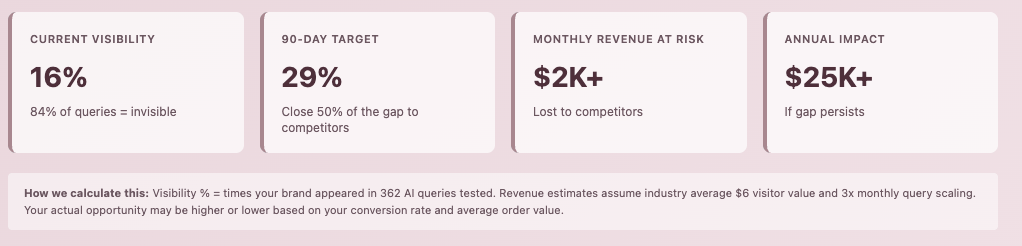

Last month I shared the early version of the AI Visibility Dashboard. This month, it’s evolved. I keep coming back to the same question:

If buyers start discovering products inside AI tools instead of Google… how will we know?

Most reporting today assumes the journey starts with a search engine. But more and more research is happening inside ChatGPT, Claude, Perplexity, Gemini. And right now? Most brands have zero visibility into whether they’re being mentioned, recommended, or misunderstood.

That’s the blind spot I’m trying to close.

The AI Visibility Tracker

Here’s the working hypothesis:

If AI systems are retrieval engines with a synthesis layer, then visibility inside them should be measurable over time.

So the tracker now monitors:

- Whether a brand shows up for core category queries

- How it’s positioned when it does

- Which competitors appear alongside it

- Share of voice across tools

- Movement month over month

It doesn’t replace SEO. It sits beside it.

Think of it as a new channel we’re starting to measure before it becomes obvious.
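For the curious, here is a minimal sketch of how metrics like mention counts, share of voice, and month-over-month movement could be computed from saved AI responses. This is not the tracker's actual code. The brand names, sample responses, and function names are all made up for illustration, and the matching is a naive case-insensitive substring check that a real tool would likely need to improve on.

```python
def mention_counts(responses, brands):
    """Count how many responses mention each brand (naive substring match)."""
    counts = {brand: 0 for brand in brands}
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    return counts


def share_of_voice(counts):
    """Convert raw mention counts into each brand's share of all mentions."""
    total = sum(counts.values())
    if total == 0:
        return {brand: 0.0 for brand in counts}
    return {brand: n / total for brand, n in counts.items()}


def month_over_month(previous, current):
    """Change in share of voice per brand between two months."""
    return {b: current.get(b, 0.0) - previous.get(b, 0.0) for b in current}


# Hypothetical brands and hypothetical AI answers, for illustration only.
BRANDS = ["Acme", "Globex", "Initech"]
january = [
    "Acme and Globex are popular.",
    "Try Globex.",
    "Initech is an option.",
]
february = [
    "Acme is a top pick.",
    "Acme or Globex.",
    "Globex works well.",
]

jan_sov = share_of_voice(mention_counts(january, BRANDS))
feb_sov = share_of_voice(mention_counts(february, BRANDS))
movement = month_over_month(jan_sov, feb_sov)

print(jan_sov)   # {'Acme': 0.25, 'Globex': 0.5, 'Initech': 0.25}
print(feb_sov)   # {'Acme': 0.5, 'Globex': 0.5, 'Initech': 0.0}
print(movement)  # {'Acme': 0.25, 'Globex': 0.0, 'Initech': -0.25}
```

Share of voice here is simply a brand's mentions divided by all brand mentions in the sample. That is one reasonable definition among several, so treat the numbers as directional rather than precise.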

Visibility Tracker: Based on 362 AI Queries

What I care about isn’t a single answer. I care about patterns.

- Are you consistently associated with your core use case?

- Are you showing up as an alternative to the big players?

- Are AI tools categorizing you incorrectly?

Those are strategic signals.

The Prompt Generator

Very quickly I realized something: If we want useful data, we need consistent prompts. So I built a structured prompt generator for clients. It standardizes how we test visibility.

Instead of random screenshots, we now run repeatable queries like:

- Best tools for [problem] in [industry]

- Alternatives to [competitor]

- Top [category] software for [use case]

Then we rerun the same structure monthly.
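As a rough illustration of what "structured" means here, the sketch below fills the three templates above from a small set of values and always returns the prompts in the same order, so the same set can be rerun each month. It is a simplified stand-in, not the actual generator. The template field names, the example values, and the `build_prompts` helper are assumptions made for the example.

```python
from itertools import product
from string import Formatter

# The three query patterns above, written as Python format templates.
TEMPLATES = [
    "Best tools for {problem} in {industry}",
    "Alternatives to {competitor}",
    "Top {category} software for {use_case}",
]


def fields_in(template):
    """Return the placeholder names used in a template, in order."""
    return [name for _, name, _, _ in Formatter().parse(template) if name]


def build_prompts(templates, values):
    """Expand every template with every combination of its field values.

    Output order is deterministic, so monthly reruns use identical prompts.
    """
    prompts = []
    for template in templates:
        names = fields_in(template)
        for combo in product(*(values[name] for name in names)):
            prompts.append(template.format(**dict(zip(names, combo))))
    return prompts


# Hypothetical client inputs, for illustration only.
values = {
    "problem": ["invoice tracking"],
    "industry": ["construction", "healthcare"],
    "competitor": ["ExampleCo"],
    "category": ["accounting"],
    "use_case": ["freelancers"],
}

prompts = build_prompts(TEMPLATES, values)
for prompt in prompts:
    print(prompt)
# Best tools for invoice tracking in construction
# Best tools for invoice tracking in healthcare
# Alternatives to ExampleCo
# Top accounting software for freelancers
```

Keeping the templates and values in plain data makes it easy to store them alongside each month's results, so any change in visibility can be traced to the AI tools rather than to a change in wording.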


The goal isn’t to “hack” AI. It’s to understand how these systems currently see your brand — and feed those learnings back into SEO, content, and positioning.

What I’m Trying to Figure Out

I don’t know yet if this becomes a standard reporting metric in 2026.

But I do know this: If AI visibility starts influencing pipeline quietly, I want my clients ahead of it — not reacting six months later.

Right now volumes are small. But so was organic search once. I’m running this across client accounts to see what patterns emerge. And if the signal is real, we’ll know early.

If This Is On Your Mind…

I’m starting to take on a small number of new SEO + AI Visibility clients this spring.

If you’re curious where you show up inside AI tools — or whether you show up at all — hit reply. I’ll share a snapshot and we can see if it makes sense to dig deeper.

🔥 Vibe Check

Me, every single time I’ve left the house in 2026.

📬 Let’s Talk

If this made you rethink how you’re measuring visibility — or just reminded you that the internet can still be weird and wonderful — send it to someone who needs a smarter feed.

And if you’re curious what your brand looks like inside AI tools right now, you know where to find me.

Talk soon,

Tiffany